Nudging the public into censorship: The effect of default opt-in on decision making

Ben Meghreblian looks at how research into decision making can shed light on the Government's efforts to encourage Internet users to have their Internet connections filtered by default.

Image: Internet censorship in Slovak by opensourceway CC BY-SA 2.0

Last year the Government decided that it wanted ISPs to “actively encourage parents...to switch on parental controls”. Two weeks ago the Department for Culture, Media & Sport released a policy paper in which it said:

“Where children could be accessing the internet, we need good filters that are preselected to be on, and we need parents aware and engaged in the setting of those filters.” (p. 36)

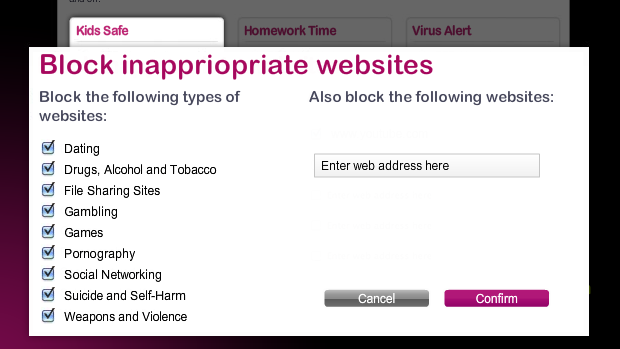

Deploying a configuration screen with one or more options pre-selected raises questions regarding how ‘free’ a choice this is, how considered users decisions will be, and how many will choose the alternative (unfiltered) option. The screen describing the filtering categories might look something like this:

We can shed light on this issue using research from many fields, including psychology and economics, where the tendency to choose the default option is known as ‘status quo bias’ and regularly affects decision making. In policy-making circles, the deliberate use of defaults to influence decisions has been called ‘nudge’. Thaler & Sunstein say it can be used to:

“...attempt to steer people's choices in welfare-promoting directions without eliminating freedom of choice”

How welfare-promoting these proposed Internet filters are, and how free people’s choices will be, are the key issues.

N.B. Interested readers may wish to read ‘Nudge and the Manipulation of Choice: A Framework for the Responsible Use of the Nudge Approach to Behaviour Change in Public Policy’.

Status-quo bias and defaults in brief

Status quo bias can be explained as a cognitive bias towards the current state of affairs. From a rational perspective, when individuals are deciding between alternatives, only the preference-relevant features should influence their decisions (for example, prospective car buyers choosing from a set of colours for their new car). In reality, however, when an option is labelled as the status quo it influences decision making more than a rational model would predict.

A choice can be labelled as the status-quo or default in two main ways:

- Pre-selecting an option as the default
- Referring to statistics regarding past majority choice

As discussed in the examples below, framing an option as the default greatly increases the chances that that option will be chosen, even when the option assigned to be pre-selected is random. This knowledge, coupled with a policy-maker’s agenda, could lead to a situation where a person is ‘nudged’ into making a particular decision. As Mandl and colleagues warn:

“A major risk of defaults is that they could be exploited for misleading users and making them to choose options that are not really needed to fulfill their requirements.” (p. 19)

Examples from research: how defaults affect decision making

Default choice

Perhaps the best-known example of default opt-in is organ donation. Countries that employ a default opt-in (presumed consent) scheme see average consent rates of 97.56%, compared with 22.73% in countries that employ a default opt-out (explicit consent) scheme.

A similar pattern of behaviour can be seen in decisions relating to car insurance, healthcare plans, and internet privacy policies, where both defaults and the linguistic framing of options affect opt-in rates.

Interestingly, research has demonstrated that if people are given the option to delay making a decision, those who accept the delay (even one of only 15 minutes) are less likely to choose the default.

Active choice

In comparison, giving people an active choice (with nothing pre-selected as the default) can lead to more beneficial outcomes. An active choice offers a seemingly free choice and appears to lead to outcomes that may be more desirable, quantitatively and/or qualitatively.

For instance, in one study 28% more employees chose to participate in a 401(k) savings scheme when they were given an active choice about whether or not to participate (compared to when the default was not to participate).

Researchers have also recently developed a concept called ‘enhanced active choice’ (EAC), which retains active choice but adds language to certain options to make their associated risks more obvious. When testing this approach, the researchers found that 75% of participants agreed to get a flu shot under EAC, compared with 62% under standard active choice. The concept was applied to healthcare, where the researchers argue that presenting patients with a default choice is likely to be unethical.

N.B. Interested readers may wish to read ‘Salvaging the concept of nudge’, which offers a defence of nudge in healthcare contexts.

Why people choose the default option

There are three main reasons why people choose the default option:

- The default is seen as a suggestion or recommendation by the policy-maker. Additionally, if an expert opinion is sought and the expert is biased towards the status quo, the decision is almost certain to go in that direction too.
- Making a decision involves effort, whereas accepting the default is effortless. Effort can be physical (filling in forms, telephoning, posting) or mental (weighing up alternatives).
- Defaults often represent the existing state or status quo, and change usually involves a trade-off. There may be perceived risks associated with change, and people are loss averse (they feel losses more strongly than equivalent gains).

What might this tell us about filtering choices?

Pre-selected

- Given the above evidence on default choices, combined with the Government's position of ‘asking’ ISPs (under threat of legislation) to implement default blocking, it seems clear that a large number of users will be nudged into choosing a filtered internet connection.

- The exact numbers are hard to predict and depend partly on the design of the decision screen, but comparable studies suggest that as many as 89% (almost 9 in 10) of users could be nudged into filtering their internet connection.

- In the case of parents this number may be even higher, depending on the language used to describe the filters (see below).

Framing filters as ‘parental controls’

- For parents configuring their internet filter, framing the filter as a ‘parental control’, or anything similar that reminds them of their additional role (i.e. not just as an internet user, but also as a parent), is likely to lead to greater risk aversion and therefore to more parents choosing the filtered connection.

- Counterintuitively, this effect may also influence adults who are not parents but who understand a parent’s role well. On the other hand, it could be argued that such framing clarifies who the controls are intended for, leading adults without children to infer that the filtered option is not designed for them.

- The psychological theory which speaks to this is called ‘priming’, where a person’s behaviour can be influenced by exposure to prior stimuli. Examples include priming people with words around a certain theme (e.g. happy/sad), describing or exposing them to certain behaviour (e.g. risky/careful), and reminding them of roles they could play or parts of their identity (e.g. parent/daughter).

Different linguistic framing

- As mentioned in the examples above, the linguistic framing of a problem and additional explanatory text can affect decision making. For example, in a default opt-in condition, phrasing an option positively or negatively has been shown to affect users’ decisions.

- If we assume the ISPs will use a Yes/No choice with one option pre-selected, a comparable study design suggests that ISPs could expect an uptake of up to 89.2%.

- However, the ISPs could potentially attain an even higher opt-in rate (up to 96.3%) with a single unticked checkbox:

☐ Do NOT filter my internet connection

Closing thoughts

There seems to be a contradiction in what the Government wants. On the one hand it wants “...filters that are preselected to be on...”, and on the other hand it also wants “...parents aware and engaged in the setting of those filters”. But from what the research tells us about decision-making, these two desires seem to work against each other.

We know that defaults will cause more people to turn on their filters. But deploying defaults will also likely reduce how carefully people consider their options and engage with the issues. When launching this new policy, David Cameron said “One click to protect your whole home and to keep your children safe”, but this arguably encourages a ‘click and forget’ approach to parenting.

Although the Prime Minister’s speech and the Government’s earlier response rightly refer to education and the role of ‘digital parenting’, surprisingly there appears to be no mention of these issues in the proposed configuration screen. Given the importance of the decision, surely this is an ideal place to signpost them. For example, why not include messages on the screen that will:

- engage people in the complexities of the arguments, e.g. the pros and cons, and how (overly) successful the blocking is
- encourage those who are parents to consider the issues, start a discussion with their children about online safety, and empower them to manage risk
- inform parents of the other options available to them, such as device-based filtering

To quote Professor Tanya Byron, the clinical psychologist who authored one of the expert reviews commissioned by the Government over the past couple of years:

“Children and young people need to be empowered to keep themselves safe – this isn’t just about a top-down approach. Children will be children – pushing boundaries and taking risks. At a public swimming pool we have gates, put up signs, have lifeguards and shallow ends, but we also teach children how to swim”

N.B. Last week the Government released proposed amendments to the Consumer Protection Regulations (Unfair Trading) that would “ban pre-ticked tick boxes for extras that the consumer may not want or need”.

Ben Meghreblian has a background in psychology & IT and is currently working on a report for the Open Rights Group relating to internet filtering. His interests include psychology, human rights, technology, and science-related topics. He tweets @benmeg

ORGZine: the Digital Rights magazine written for and by Open Rights Group supporters and engaged experts expressing their personal views